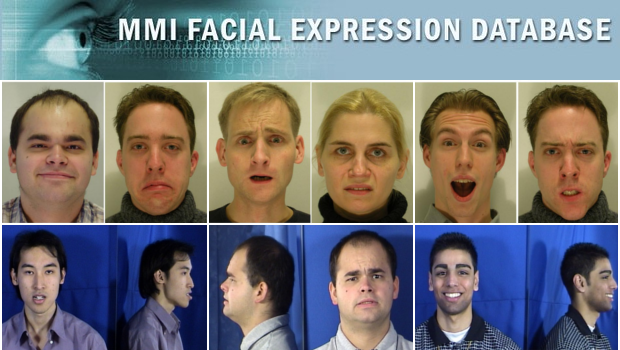

MMI Facial Expression Database

The MMI Facial Expression Database is an ongoing project that aims to deliver large volumes of visual data of facial expressions to the facial expression analysis community.

A major issue hindering new developments in the area of Automatic Human Behaviour Analysis in general, and affect recognition in particular, is the lack of databases with displays of behaviour and affect. To address this problem, the MMI Facial Expression Database was conceived in 2002 as a resource for building and evaluating facial expression recognition algorithms. The database addresses a number of key omissions in other databases of facial expressions. In particular, it contains recordings of the full temporal pattern of a facial expression, from a neutral face, through the onset, apex, and offset phases, and back to a neutral face.

Secondly, whereas other databases focused on expressions of the six basic emotions, the MMI Facial Expression Database contains both these prototypical expressions and expressions with a single FACS Action Unit (AU) activated, for all existing AUs and many other Action Descriptors. Recently, recordings of naturalistic expressions have been added too.

The database consists of over 2900 videos and high-resolution still images of 75 subjects. It is fully annotated for the presence of AUs in videos (event coding), and partially coded at frame level, indicating for each frame whether an AU is in the neutral, onset, apex, or offset phase. A small part was annotated for audio-visual laughter. The database is freely available to the scientific community.
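
To illustrate the difference between the two annotation levels, here is a minimal Python sketch. It does not reflect the database's actual file format, which is not described here; the `Phase` enum, the function names, and the sample labels are all hypothetical. It assumes frame-level coding for one AU arrives as a list with one phase label per frame, and shows how that list could be collapsed into phase segments and into an event-level "AU present" flag.

```python
from enum import Enum
from itertools import groupby


class Phase(Enum):
    """The four temporal phases named in the frame-level coding."""
    NEUTRAL = "neutral"
    ONSET = "onset"
    APEX = "apex"
    OFFSET = "offset"


def phase_segments(frame_phases):
    """Collapse per-frame labels into (phase, first_frame, last_frame) runs."""
    segments = []
    start = 0
    for phase, run in groupby(frame_phases):
        length = len(list(run))
        segments.append((phase, start, start + length - 1))
        start += length
    return segments


def au_present(frame_phases):
    """Event-level view: the AU counts as present if any frame is non-neutral."""
    return any(phase is not Phase.NEUTRAL for phase in frame_phases)


# Hypothetical frame-level labels for a single AU in a 12-frame clip.
frames = (
    [Phase.NEUTRAL] * 2
    + [Phase.ONSET] * 3
    + [Phase.APEX] * 4
    + [Phase.OFFSET] * 2
    + [Phase.NEUTRAL]
)

for phase, first, last in phase_segments(frames):
    print(f"{phase.value}: frames {first}-{last}")
print("AU present in clip:", au_present(frames))
```

Under these assumptions, event coding corresponds to the single flag per AU and video, while frame-level coding retains the full neutral, onset, apex, offset sequence.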

More information about the database can be found here and here.

Statistics and details about the annotations can be found on the "About" page.